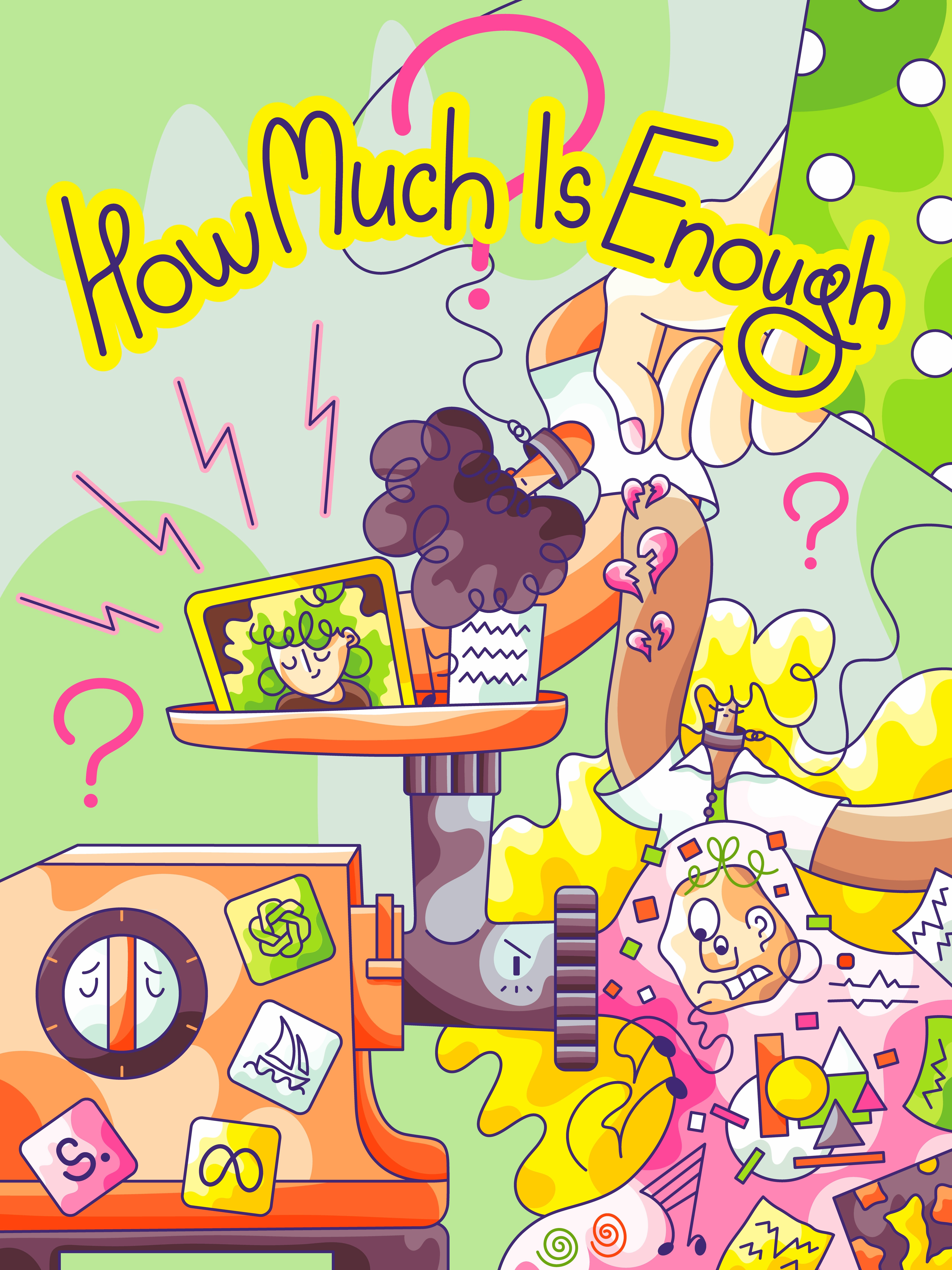

How Much Is Enough? Generative AI


How much is enough? Copyright violation? Disregard of consent? Children’s consent? Job elimination for the sake of productivity rooted in mediocrity? Taste degradation? I really want to know.

These days, San Francisco is a lonely and dystopian place to be an artist and illustrator. I’ve been living here since 2019, watching tech companies openly normalize disrespect for my profession, steal property, drive lay-offs, and strip creative professionals of the agency to protect their rights and work.

As a mother, I’ve been seeing how unregulated AI is being pushed on the most vulnerable members of our society: children.

Meta, OpenAI, Midjourney, Google, Microsoft, Anthropic, Stability AI, DeviantArt… The list goes on.

In June 2024, Meta decided to remove the option for US-based users to opt out of having their public data used for training AI models. A year later, a federal court hearing concluded that Meta can continue training its AI models using publicly available data, including copyrighted works.

Nausea, anyone?

I think I have a better word: abuse. The Cambridge Dictionary offers a variety of definitions, one of them being:

a situation in which a person uses something in a bad or wrong way, especially for their own advantage or pleasure

From my personal experience and years of therapy, I will also add control, power imbalance, and gaslighting. The court may call it “fair use”, but for those of us who are at the core of this reality, it feels like royalty-free exploitation.

No consequences attached. Just because they can.

One thing I’ve learnt after six years of living in this city is that most tech CEOs don’t care about anything but profits. They market ethics based on lies, and they can get away with it because they own the marketing channels. Whoever has the fattest wallet sets the music. Is that a surprise? Not really. Not to me at least.

What is new to me, though, is that now I know they don’t care about the law, either. Making a sport of taking advantage of legal loopholes. Looking for ways to screw the very users whose work supports the heartbeat of their products.

Leading by example, huh?

In parallel to this fierce fight for legalized copyright neglect, San Francisco started seeing a blooming garden of AI-generated visuals. From company logos and blog banners to food truck designs and children’s education materials. Unimaginatively basic, disturbingly broken, and soulless.

But that doesn’t matter. Over the past couple of years I’ve learnt that a lot of people don’t notice those “AI hallucinations”. Shapes overlapping in all the wrong places, weird shadows, fingers resembling soba noodles, little random bits and pieces sprinkled all over images like confetti. Looking messy and, well, cheap.

And as much as my eyes want to melt out of the eye sockets when I look at those results, my eyes seem to be more of an exception. Professionally trained to notice every minor detail, and getting paid to make sure nothing like this sees the light of day.

Well, that was before 2022.

The 2025 narrative focuses on even higher speed and comprehensive adoption of AI tools regardless of the purpose. Those of us who have experience working in-house have seen it first-hand.

From 2021 to the beginning of this year, I worked as a Senior Illustrator at a San Francisco-based tech company. I was hired to create illustration-focused branding to deepen the emotional connection between users and the product. For the first two years, I drew everything by hand. For the second two years, I compromised my ethics and the quality of my work to keep the job and continue to provide for my child.

It’s not just my reality, it’s the reality for many designers and creatives working in tech. Though as for illustrators, at this point, there aren’t many left. Over the past two years, there have been “quiet” eliminations of entire illustration teams in favor of training LLMs on years of their work.

As bad as it sounds for creative professionals, for higher-ups, it all comes down to business. Output. How much money they can save, preferably in salaries. Fear of being that one tech visionary who’s the last to jump on the AI train.

It’s nothing personal, just numbers. Generative AI makes the number of finished assets produced per hour significantly higher. That’s what’s good for business. Ethics and quality are not part of this metric.

“But Oila, quality should be good for business, no?”

I believe so, too! In fact, I believe in many things.

I believe that technological progress should be happening responsibly. I believe it should be legally regulated from the start. There’s a common theme in the Silicon Valley community that early regulation of new technology is bad for innovation.

Tech bros believe that regulation slows progress.

I don’t believe in progress at the expense of someone else’s livelihood. I don’t believe in progress based on theft.

If you want to use technology for prOgress, progrEss in areas where it can make a truly positive impact. Climate change? Cancer treatments? Pollution? The irony is not lost on me: it feels weird talking about AI helping reduce negative environmental impact while making a significant negative impact by, well, existing.

From where I’m sitting, though, the most widespread use case for AI today is still content creation. But not the kind that brings genuine creativity, connection, or meaning.

It’s on a surface level, doing work that doesn’t matter. Shallow. Unoriginal. Misleading. Killing authenticity, creativity, and humanity along the way.

And what about taste?

I’m genuinely concerned about how this ever-growing widespread adoption of Generative AI is going to significantly distort how we perceive visual information. I’m not talking about the subjectivity of taste here where one can find something beautiful while another thinks it’s ugly.

I’m talking about how the more we see these AI hallucinations repeatedly, the more we see AI-enhanced images and videos (which are apparently becoming a thing on YouTube), the more our eyes stop trying to resist and accept these outputs as the new bar for normality, artistic quality, and emotional resonance.

(I’m sure my friends in literature and music can relate).

As a mother, I’m even more worried about children. When my five-year-old started bringing home AI-generated school sheets and coloring pages, my faith in humanity disappeared almost completely.

I want to clarify here that I have no resentment towards schools and daycares. One of my future essays will be about how much is enough corruption in the educational sector. Till it’s solved, I want them to have as many resources as they can get.

(If you’re reading this and you work in education or a related cause, feel free to contact me. I’m always happy to help if I can).

My problem is with exposing the little ones to AI-generated results. They don’t have a few decades of AI-free experience to have any framework for comparison. In fact, they will never have these few decades of AI-free experience.

Their critical thinking skills, their analytical skills, and their sense of authority to question the content they’re given are still in development.

Their taste is in development, too. I completely understand that the kids of today are facing their own generational challenges, but when it comes to AI-generated content, I want them to have a choice.

Especially when it comes to a technology that has been around for five minutes and is already sinking in lawsuits.

As a mother, I want to have a choice. This regular exposure to Generative AI aggressively interferes with my parenting decisions. I don’t want my son to be confused by AI hallucinations while he’s still confused and learning about other things that are actually real.

I find it very disturbing and scary.

I AM LIVID WHEN I THINK ABOUT AI MODELS BEING TRAINED ON CHILD SEXUAL ABUSE MATERIALS.

IF COPYRIGHT IS NOT A GOOD ENOUGH REASON TO START REGULATING THE FUTURE OF GENERATIVE AI, THIS ABSOLUTELY SHOULD BE!

I’M A PISSED OFF MOM, AND I DEMAND THAT TECH COMPANIES BE PUT ON A LEASH! I DEMAND IT FOR OUR CHILDREN, THEIR SAFETY AND FUTURE!

HOW MUCH IS ENOUGH GENERATIVE AI?! I REALLY WANT TO KNOW.

I also want to know how and when creative professionals will finally be compensated for this mass exploitation.

When tech representatives in San Francisco are asked about their advice for the creative community, they get nervous and babble some bullshit about dying like phoenixes and being reborn as creative generalists who use AI tools to help them become absolute creative unicorns.

In the reality of the corporate day-to-day workload, though, it means doing everything instead of thinking across disciplines and bringing strategic insight. A creative generalist in San Francisco is an entire creative department shrunk to the size of one person.

Illustro-graphico-ui-animato-brando-videographo-composer. Very high in speed, and equally shitty at everything. Constantly living on the edge of a nervous breakdown, under the fear of being let go.

How much is enough Generative AI, again?

Well, clearly not enough. In my 34 years of existence, I haven’t seen anything pushed as aggressively as tech bros are pushing AI.

I feel like, at this point, AI is dripping out of my ears, getting stuffed in my throat, being glued to my eyes.

If I were to continue this string of metaphors, I would also say I’m getting fucked by AI without consent and without getting paid for it, but that would make for a really dark and harsh metaphor to use.

Or would it?

How much is enough Generative AI? Mm?

Disclaimer.

This essay is my personal take on Generative AI. My attitude is shaped by my creative profession and by my personal experiences with this technology and the companies that continue to develop and use it. It’s shaped by six years of living in San Francisco and 13 years working in tech. It’s shaped by my role as a mother. It’s also shaped by my personal system of values and beliefs. If your beliefs and experiences are different, I respect that.

Thank you for reading!
